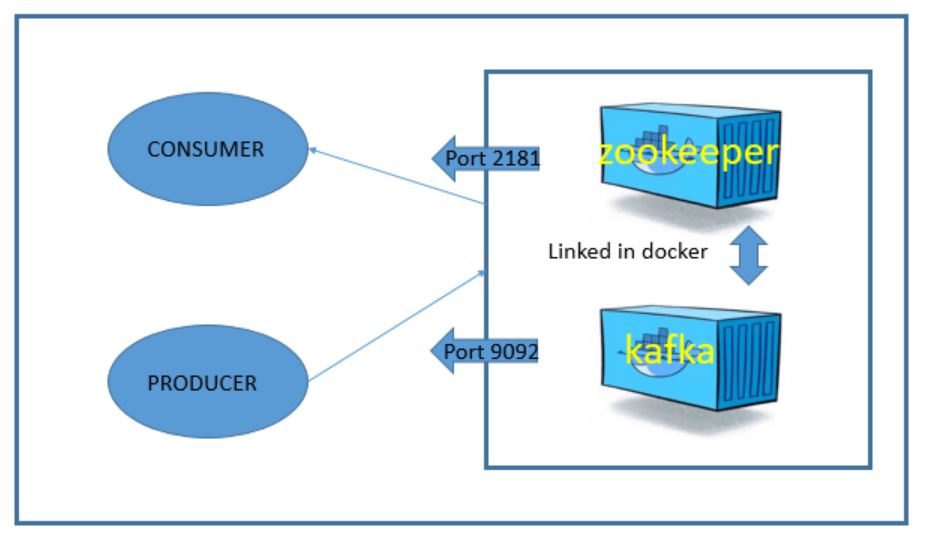

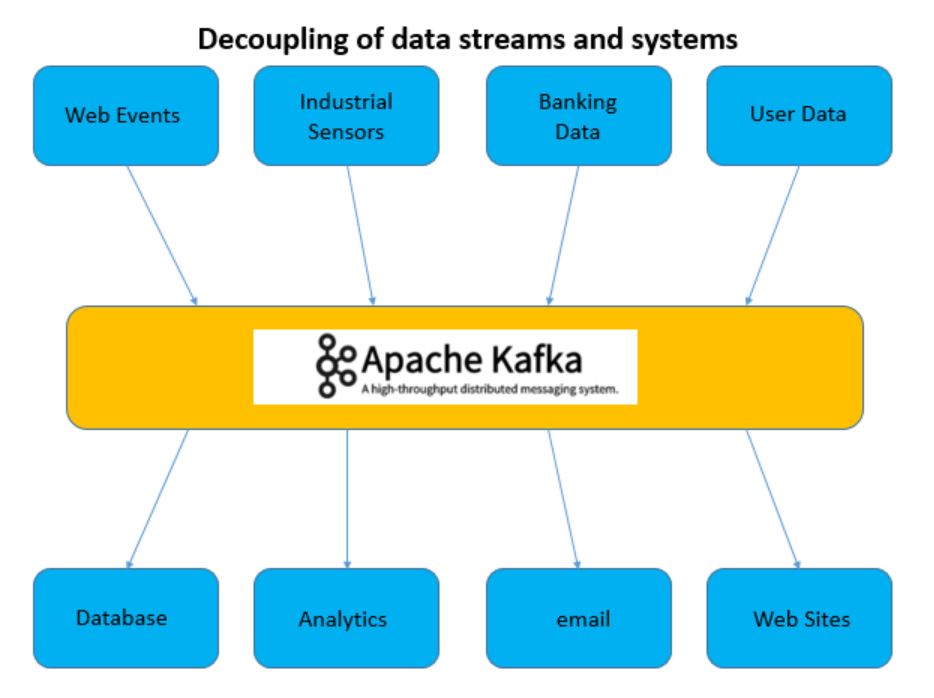

Kafka is a way to decouple data streams from the systems that use them. Instead of one system collecting data and sending it to every other system that needs it, Kafka lets each ‘thing’ that has data (called a producer) write that data to a ‘topic’. Something called a consumer reads from the topic, and many consumers can read from a single topic. It looks something like this!
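To make the producer/topic/consumer relationship concrete, here is a toy, in-memory model in Python. It is not Kafka and not how Kafka stores data internally (a real topic is a partitioned, persisted log). `ToyBroker`, `ToyConsumer` and the topic name are made up for illustration. It only shows the key idea: producers append to a topic, and each consumer keeps its own position (offset), so several consumers can read the same messages independently.

```python
from collections import defaultdict


class ToyBroker:
    """In-memory stand-in for a Kafka broker; an illustration only, not Kafka's design."""

    def __init__(self):
        # topic name -> append-only list of messages
        self.topics = defaultdict(list)

    def produce(self, topic, message):
        # Producers only append; they never need to know who will read the message.
        self.topics[topic].append(message)


class ToyConsumer:
    def __init__(self, broker, topic):
        self.broker = broker
        self.topic = topic
        self.offset = 0  # index of the next message this consumer has not read yet

    def poll(self):
        # Return every message written since the last poll and advance this consumer's offset.
        log = self.broker.topics[self.topic]
        new_messages = log[self.offset:]
        self.offset = len(log)
        return new_messages


broker = ToyBroker()
dashboard = ToyConsumer(broker, "sensor-readings")
archiver = ToyConsumer(broker, "sensor-readings")

broker.produce("sensor-readings", "temp=21")
broker.produce("sensor-readings", "temp=22")

print(dashboard.poll())  # ['temp=21', 'temp=22']
print(archiver.poll())   # ['temp=21', 'temp=22']  (reading does not remove messages)
print(dashboard.poll())  # []  (nothing new since its last poll)
```

The producer never talks to the consumers directly, which is exactly the decoupling described above: you can add a third consumer later without touching the producer.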

One of the best ways to see Kafka in action is to get it running in your lab! This can be done on any host with Docker and docker-compose installed. I’m not going to cover that installation here, but I already have a post on this blog over here.

Good, you have Docker and docker-compose installed, but you will need a couple of other things, starting with git.

And let’s add pip3 so we can pip install Python packages!

Almost there. If we are going to build our own producer and consumer in Python, we will need to install librdkafka. Enter this:
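The post doesn’t name the Python Kafka library, but since it depends on librdkafka, a likely candidate is confluent-kafka (installed with `pip3 install confluent-kafka`), which wraps librdkafka. Assuming that is the library, this small sketch checks that both pieces are visible to Python after installation. The function name `kafka_client_versions` is hypothetical.

```python
def kafka_client_versions():
    """Report the confluent-kafka and librdkafka versions, or None if the package is missing."""
    try:
        import confluent_kafka  # assumption: the Python client in use is confluent-kafka
    except ImportError:
        return None
    return {
        # version() and libversion() each return a (version_string, version_int) tuple
        "confluent_kafka": confluent_kafka.version()[0],
        "librdkafka": confluent_kafka.libversion()[0],
    }


if __name__ == "__main__":
    versions = kafka_client_versions()
    if versions is None:
        print("confluent-kafka is not installed yet (try: pip3 install confluent-kafka)")
    else:
        print(versions)
```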

Now we are ready to get the Docker containers and spin up the Kafka environment, which will look something like this.

OK, this isn’t difficult at all. It’s super easy to get this up and running. We are going to git clone a repo from my GitHub account. On a Linux machine I like to work out of my own personal ‘opt’ directory, so make one for yourself. Navigate to your home directory and do this.

Now change to that directory so we can start the clone. Run this command:

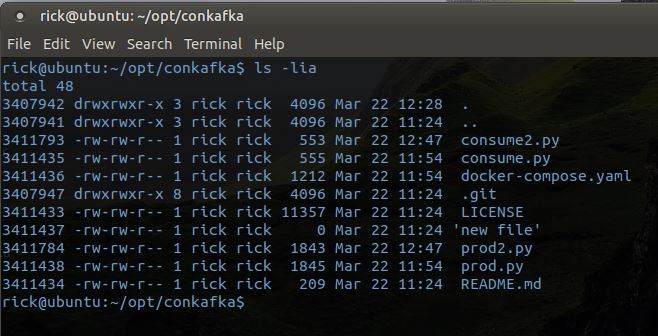

Now if you look, you will see a directory called ‘conkafka’. Enter that directory and you will see a few files:

To bring up the Docker lab, simply enter:

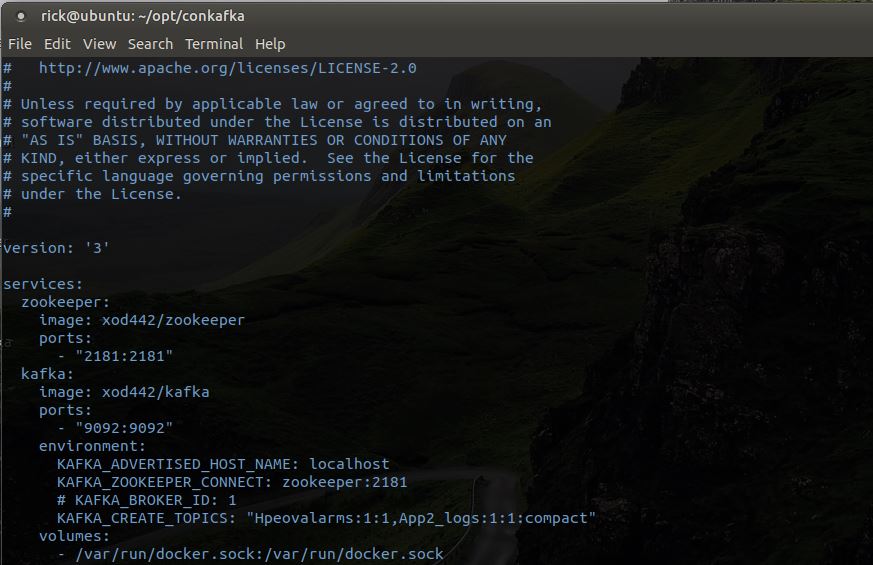

That’s it! You will see Docker pulling the images from my xod442 Docker Hub account and setting up some connections. Two containers will be running: zookeeper and kafka. You can tweak the docker file if you want your own pre-defined Kafka topic. Here is what the docker-compose.yaml file looks like.

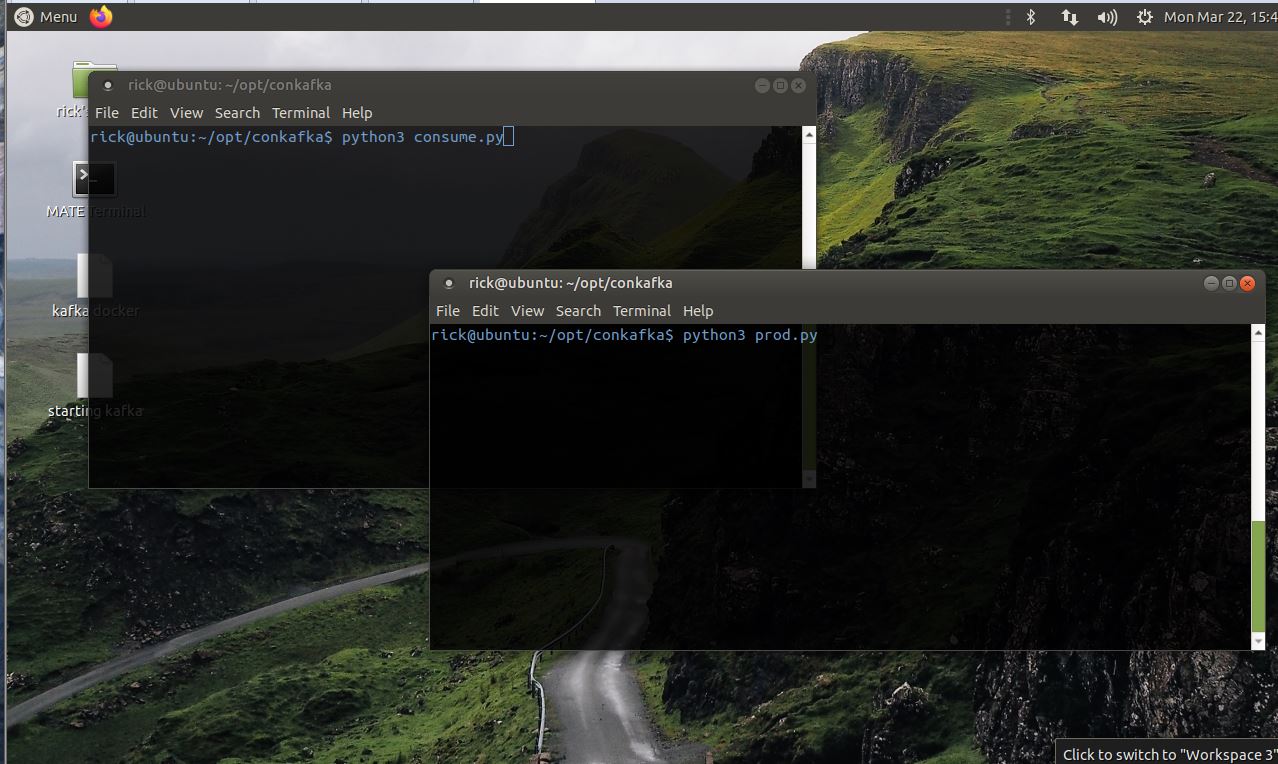

Alright, now we can see Kafka in action. Start by opening two terminal windows in the conkafka directory. In one window, type this command, but do not hit Enter.

In the other window, type the following, again without hitting Enter:

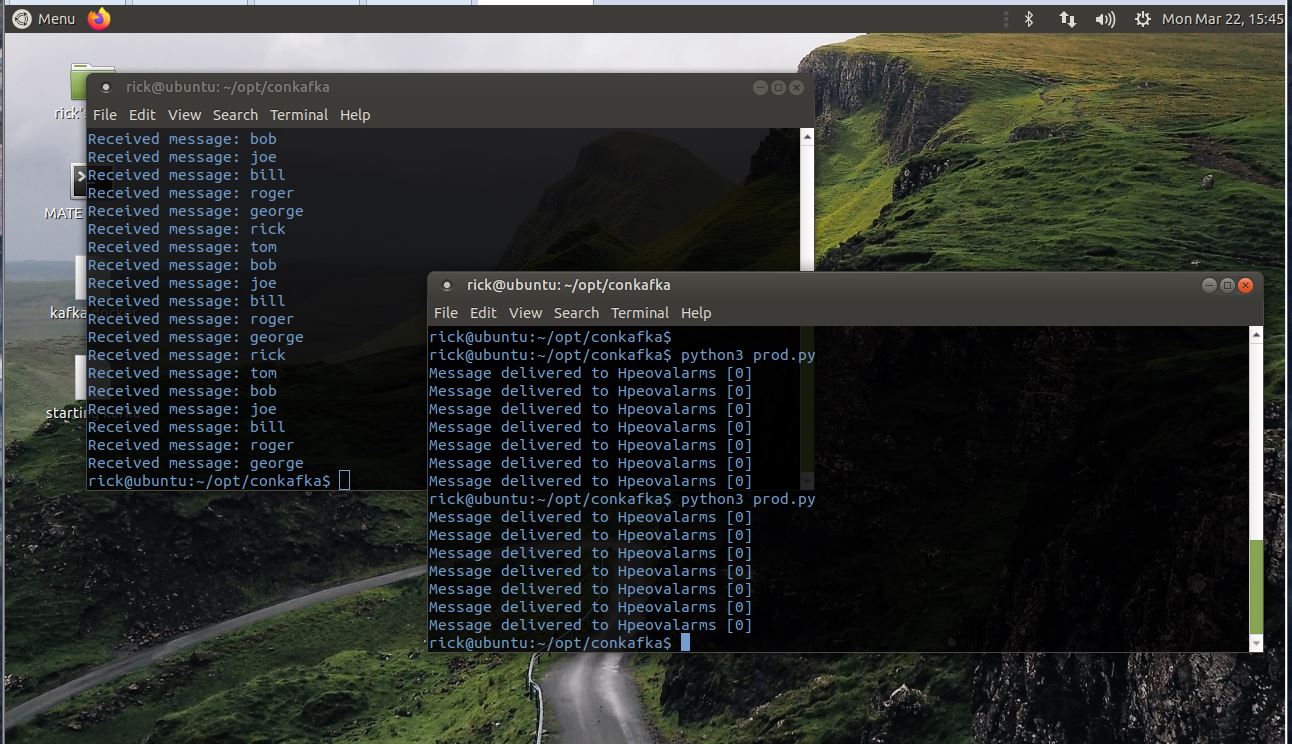

You should have something that looks like this:

Now we are ready to see Kafka in action. First, hit Enter in the consumer window. This starts the consumer, which reads from the topic. As soon as you have done that (I have the consumer set to time out after 10 seconds), hit Enter in the producer window. The producer sends messages to the topic, and they will appear in the consumer window. Press the up arrow and run prod.py several times.

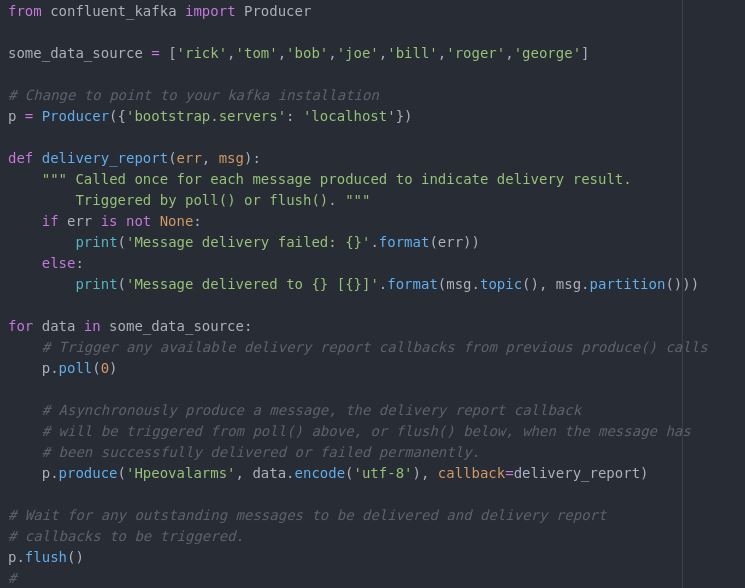

Now let’s take a quick look at the magic behind this example. It is just a couple of Python scripts, one for the producer and another for the consumer. They look like this. First, the producer.

At the top of the file we import the Python library used to create the producer. I added a simple example of some data to send to the topic.

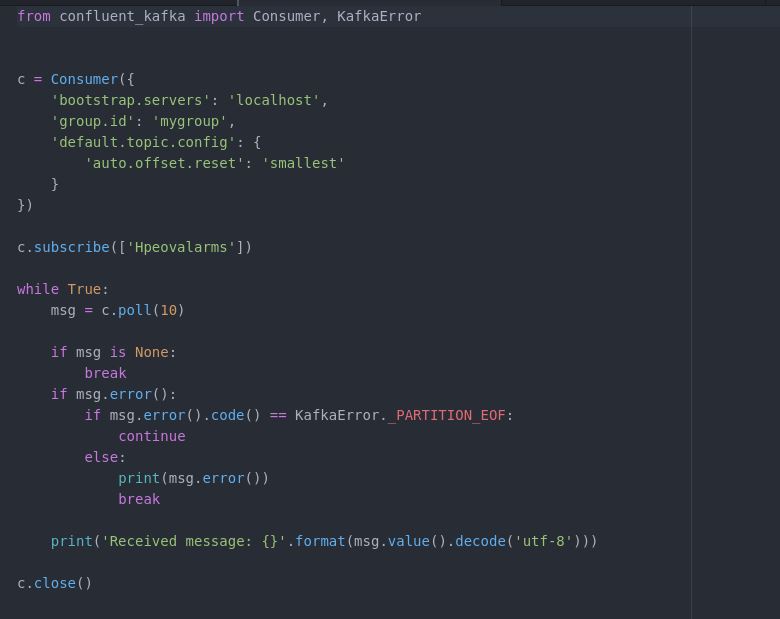
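The producer script is only shown as a screenshot, so here is a minimal sketch of what such a producer can look like, assuming the library is confluent-kafka. The broker address `localhost:9092`, the topic name `test-topic` and the sample records are placeholders I made up, not values from the repo; match them to your own docker-compose.yaml.

```python
import json


def encode_record(record):
    """Serialize a dict to UTF-8 JSON bytes, used as the Kafka message value."""
    return json.dumps(record).encode("utf-8")


def delivery_report(err, msg):
    # Called once per message (from poll() or flush()) with the broker's verdict.
    if err is not None:
        print(f"Delivery failed: {err}")
    else:
        print(f"Delivered to {msg.topic()} [partition {msg.partition()}] at offset {msg.offset()}")


def produce_all(producer, topic, records):
    """Queue each record on the topic, then wait until everything has been delivered."""
    for record in records:
        producer.produce(topic, value=encode_record(record), callback=delivery_report)
        producer.poll(0)  # serve delivery callbacks for earlier messages without blocking
    producer.flush()  # block until all queued messages are delivered (or fail)


if __name__ == "__main__":
    from confluent_kafka import Producer  # assumption: confluent-kafka is the client library

    # Hypothetical broker address and topic: adjust to your setup.
    producer = Producer({"bootstrap.servers": "localhost:9092"})
    sample_data = [
        {"device": "switch-01", "status": "up"},
        {"device": "switch-02", "status": "down"},
    ]
    produce_all(producer, "test-topic", sample_data)
```

`produce()` only queues the message locally; the `flush()` at the end is what makes a short script like this wait until the broker has acknowledged everything before exiting.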

The consumer.

We point the consumer at our Kafka server and tell it to subscribe to the topic. Next, we set up a loop that keeps watching the topic. Like I said, it’s very simple, but you can easily work this into a production application.

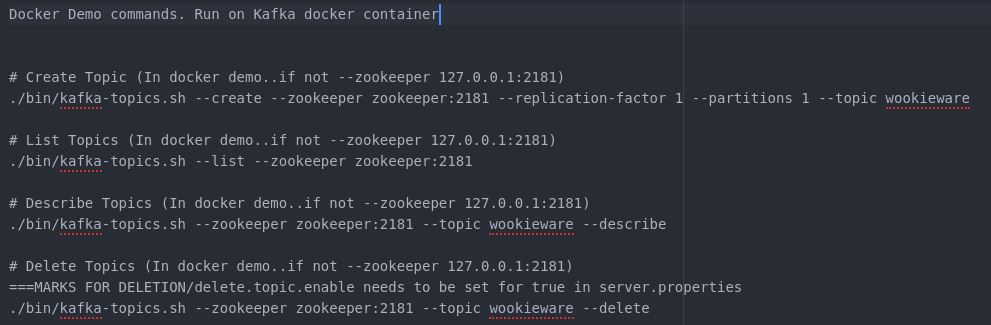
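Here is a matching consumer sketch, again assuming confluent-kafka and the same placeholder broker address and topic. The group id `demo-group` is also made up. The demo consumer times out after 10 seconds; one way to get similar behaviour, shown here, is to stop once no message has arrived for `idle_timeout` seconds. The author’s script may implement its timeout differently.

```python
import json
import time


def decode_record(value):
    """Turn raw message bytes back into a dict (assumes the producer sent UTF-8 JSON)."""
    return json.loads(value.decode("utf-8"))


def consume_until_idle(poll, handle, idle_timeout=10.0, clock=time.monotonic):
    """Poll for messages and pass each decoded record to handle().

    Stops once no message has arrived for idle_timeout seconds.
    Returns the number of records handled.
    """
    count = 0
    last_seen = clock()
    while True:
        msg = poll(1.0)  # wait up to 1 second for the next message
        if msg is None:
            if clock() - last_seen >= idle_timeout:
                break  # nothing new for idle_timeout seconds: stop watching
            continue
        if msg.error():
            print(f"Consumer error: {msg.error()}")
            continue
        handle(decode_record(msg.value()))
        count += 1
        last_seen = clock()
    return count


if __name__ == "__main__":
    from confluent_kafka import Consumer  # assumption: confluent-kafka is the client library

    # Hypothetical settings: match the broker address and topic to your own setup.
    consumer = Consumer({
        "bootstrap.servers": "localhost:9092",
        "group.id": "demo-group",
        "auto.offset.reset": "earliest",  # start from the oldest message if the group has no offset yet
    })
    consumer.subscribe(["test-topic"])
    try:
        total = consume_until_idle(consumer.poll, print)
        print(f"Read {total} messages")
    finally:
        consumer.close()  # leave the consumer group cleanly
```

Passing `consumer.poll` in as a function keeps the loop logic separate from the Kafka connection, so you can swap in your own `handle` (write to a database, call an API) when moving toward a production application.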

OK, it’s cool and all, but this is just a lab example of a non-production Kafka environment. It is here for your enjoyment and education, though it is not far from being a production environment. More on that later. For now, you may want to issue some commands from the Kafka server’s command line. Here is how you get into the command line of the server.

You should see a simple # prompt; you’re now in the kafka container. Change to the /opt/kafka directory. All the Kafka scripts are in its bin/ subdirectory.

Here is a list of commands you can try.
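If you would rather do some of this from Python instead of the container shell, confluent-kafka (again assuming that is the client library you installed) includes an `AdminClient`. This sketch creates a topic and then lists topics, roughly what the kafka-topics.sh script in bin/ does. The broker address, the topic name `my-new-topic` and the helper `user_topics` are hypothetical.

```python
def user_topics(metadata):
    """Return sorted topic names, hiding Kafka's internal ones (names starting with '__')."""
    return sorted(name for name in metadata.topics if not name.startswith("__"))


if __name__ == "__main__":
    from confluent_kafka.admin import AdminClient, NewTopic  # assumption: confluent-kafka

    admin = AdminClient({"bootstrap.servers": "localhost:9092"})  # hypothetical broker address

    # Create a topic; with a single broker the replication factor cannot exceed 1.
    futures = admin.create_topics([NewTopic("my-new-topic", num_partitions=1, replication_factor=1)])
    for topic, future in futures.items():
        try:
            future.result()  # raises if creation failed, e.g. the topic already exists
            print(f"Created {topic}")
        except Exception as exc:
            print(f"Could not create {topic}: {exc}")

    # list_topics() returns cluster metadata whose .topics maps topic name -> metadata.
    print(user_topics(admin.list_topics(timeout=10)))
```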

You should really learn more. Check out this guy over at udemy.com. You’ve got 11 bucks, right?